There is a common misconception in the enterprise world about what constitutes an AI product. Many organizations believe that integrating a machine learning model into an existing workflow qualifies as building an AI product. They add a recommendation engine here, a chatbot there, and claim they have transformed into an "AI-first" company.

This is not an AI product. This is AI as a feature.

The distinction matters more than most leaders realize. After several years of leading data science teams through AI initiatives, I have seen the difference between organizations that treat AI as a feature bolted onto broken processes and those that use AI as the catalyst to fundamentally reimagine how work gets done. The results are not even comparable.

This article is my attempt to clarify what an AI product actually is, what it takes to build one, and why the mindset shift required to get there is the most important factor in whether your AI initiatives succeed or fail.

Defining the AI Product

Here is my working definition: An AI product is not a single model integrated into a process. It is a fundamental rethinking of how a business process operates from start to finish, with AI as the architectural foundation for every step.

The key phrase is "from start to finish." Most AI initiatives fail not because of technical limitations but because they optimize individual steps within fundamentally broken processes. Real AI products step back and ask: if we could rebuild this process from scratch with today's technology, what would it look like?

A Practical Example

Consider a typical enterprise quoting workflow: client emails arrive at a generic inbox, sales agents manually triage and forward to regional teams, data entry specialists type information into a CRM, finance and underwriting teams sequentially review for pricing, and finally a quote gets packaged and sent back. Multiple iterations. Five to ten business days. Countless handoffs and opportunities for errors.

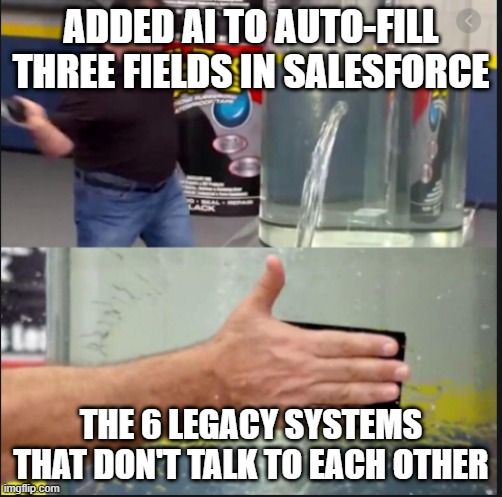

A typical "AI initiative" might add an NLP model to extract data from emails automatically, shaving a day off the timeline. But the fundamental process remains unchanged with all its manual handoffs, siloed systems, and sequential bottlenecks.

An AI product approach works backward from the customer's desired outcome. The customer wants a quote, fast and accurate. They do not care about your internal org chart or legacy systems. So you rebuild the entire process: intelligent multi-channel intake with agentic AI routing, automated entity extraction feeding directly into integrated systems via APIs, instant risk scoring and pricing models, real-time dashboards for visibility, and humans focused only on edge cases requiring judgment.

The result is not a slightly faster version of the old process. It is a fundamentally different way of operating that reduces turnaround from days to hours while improving accuracy and freeing human talent for higher-value work.

The difference between a traditional process and an AI product becomes clear when visualized. The traditional approach involves sequential handoffs, manual interventions at every step, constant delays, and multiple friction points. The AI product approach transforms this into parallel processing with intelligent automation, minimal human intervention (only for edge cases), and dramatic time reduction.

The AI Product Development Lifecycle

Building an AI product requires a structured approach that differs from traditional software development. The lifecycle I have found most effective includes four distinct phases, each with specific outputs and decision points.

Discovery Phase

Discovery is about proving the problem exists and understanding its scope. This is not about writing code beyond simple queries. The goal is validation.

Key activities:
- Quantify the current problem with actual data, not anecdotes
- Validate assumptions that stakeholders have voiced in meetings but never verified with data
- Identify and locate the data required to solve the problem
- Assess data quality and accessibility
- Provide a preliminary cost-benefit analysis covering compute, AI usage, storage, external contractors, and data purchases

Critical outputs:
- Problem sizing documentation with supporting evidence
- Data availability assessment
- Preliminary business case with expected ROI range
- Stakeholder alignment on problem definition
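To make the "expected ROI range" output concrete, here is a minimal sketch of a preliminary cost-benefit calculation. The cost categories come from the list above; the class name, function name, and all dollar figures are hypothetical placeholders, and a real business case would likely include multi-year horizons and discounting.

```python
from dataclasses import dataclass


@dataclass
class CostEstimate:
    """First-year cost estimate by category (all figures are illustrative)."""
    compute: float
    ai_usage: float
    storage: float
    contractors: float
    data_purchases: float

    def total(self) -> float:
        return (self.compute + self.ai_usage + self.storage
                + self.contractors + self.data_purchases)


def roi_range(benefit_low: float, benefit_high: float,
              costs: CostEstimate) -> tuple[float, float]:
    """Return (pessimistic, optimistic) ROI as fractions of total cost."""
    total = costs.total()
    if total <= 0:
        raise ValueError("total cost must be positive")
    return ((benefit_low - total) / total, (benefit_high - total) / total)


# Hypothetical numbers for illustration only.
costs = CostEstimate(compute=40_000, ai_usage=25_000, storage=5_000,
                     contractors=60_000, data_purchases=20_000)
low, high = roi_range(benefit_low=120_000, benefit_high=300_000, costs=costs)
print(f"Expected ROI range: {low:.0%} to {high:.0%}")
```

Reporting a range rather than a single number keeps the uncertainty visible to stakeholders at a stage when the benefit estimate is still rough.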

Many organizations skip this phase because leaders assume everyone already understands the problem. They do not. I have seen countless initiatives stall because different stakeholders had fundamentally different conceptions of what they were trying to solve. Discovery forces alignment before significant investment.

Proof of Concept Phase

POC is where developers put hands on keyboards. But the goal is not to build a scalable product. The goal is to prove that key technical challenges can be overcome.

Key questions to answer:
- Can the required data be accessed and integrated?
- Do the AI models achieve acceptable performance on real data?
- Can the system architecture support the proposed workflow?
- What permissions, access, and integrations are required?
- What are the edge cases that require human intervention?

Critical outputs:
- Working demonstration of core technical feasibility
- Documentation of technical constraints and requirements
- Identification of integration challenges
- Real-world pilot or test-and-learn results
- Refined understanding of what "good enough" looks like

Most POCs must be tested in real-world conditions as pilots. Paper analysis cannot reveal the integration challenges, edge cases, and user behavior patterns that only emerge during actual use. This is the true learning phase.

MVP or Minimum Viable Scale Phase

This is the stage after POC, once the problem has been proven solvable. A critical point to understand: the only thing you carry from POC to MVP is what you learned. The code, the infrastructure, and the quick-and-dirty solutions that proved feasibility must be rebuilt with production requirements in mind.

Key activities:
- Strip down POC artifacts and identify what must be rebuilt for scale
- Design production-ready system architecture
- Refine the cost-benefit analysis with real numbers
- Build proper AI/ML operations infrastructure
- Develop comprehensive testing and monitoring frameworks
- Create deployment roadmaps for engineering, data science, and operations teams

Critical outputs:
- Scalable, production-ready system
- Validated performance at target scale
- Finalized budget requirements
- Operational runbooks and monitoring dashboards
- Training materials for end users

The MVP proves the system can work in the real world at the scale your business requires, within the budget you have identified.

Scale Phase

With a validated MVP, the focus shifts to enterprise-wide deployment, continuous improvement, and expansion to additional use cases. This includes ongoing model monitoring, retraining pipelines, and feedback loops that improve system performance over time.
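As one illustration of what ongoing monitoring and a feedback loop can look like, the sketch below tracks a rolling error rate from reviewer feedback and flags when retraining may be warranted. The class name, window size, and threshold are assumptions made for this example, not a prescribed design; production monitoring typically also tracks input drift, latency, and cost.

```python
from collections import deque


class ErrorRateMonitor:
    """Rolling error rate over the most recent reviewed predictions."""

    def __init__(self, window: int, max_error_rate: float) -> None:
        # deque with maxlen drops the oldest outcome automatically.
        self.outcomes: deque[bool] = deque(maxlen=window)
        self.max_error_rate = max_error_rate

    def record(self, was_correct: bool) -> None:
        """Store feedback on one prediction (True if it was correct)."""
        self.outcomes.append(was_correct)

    def error_rate(self) -> float:
        if not self.outcomes:
            return 0.0
        return 1 - sum(self.outcomes) / len(self.outcomes)

    def needs_retraining(self) -> bool:
        # Only raise the flag once the window is full, to avoid
        # reacting to a handful of early samples.
        window_full = len(self.outcomes) == self.outcomes.maxlen
        return window_full and self.error_rate() > self.max_error_rate


# Hypothetical settings: watch the last 4 reviews, tolerate up to 25% errors.
monitor = ErrorRateMonitor(window=4, max_error_rate=0.25)
for correct in [True, True, True, False]:
    monitor.record(correct)
flag_before = monitor.needs_retraining()   # 25% errors, not above threshold
monitor.record(False)                      # window is now T, T, F, F
flag_after = monitor.needs_retraining()    # 50% errors, above threshold
```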

The Role of Steering Committees and Go/No-Go Decisions

Throughout this lifecycle, a steering committee of key decision makers serves as the governance mechanism. This group must be comfortable with technical details and empowered to make significant decisions for the organization.

The steering committee's responsibilities include:

- Obtaining and allocating funding for initiatives

- Receiving detailed report-outs after each phase

- Making go/no-go decisions at phase gates

- Identifying problems as early as possible in the lifecycle

- Enabling fast pivots when initiatives should be stopped

This last point deserves emphasis. The purpose of phased development with decision gates is to fail early if you are going to fail. Discovering fundamental problems during Discovery costs orders of magnitude less than discovering them after a full production deployment. The steering committee must be willing to kill initiatives that are not working rather than letting sunk cost fallacy drive continued investment.
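The gate logic can be sketched in a few lines. This is a simplified illustration, assuming each phase ends with a checklist of exit criteria that the steering committee marks as met or not met; the criteria names and the all-or-nothing rule are hypothetical, and a real committee will weigh evidence with more nuance.

```python
from enum import Enum
from typing import Optional


class Decision(Enum):
    GO = "go"
    NO_GO = "no-go"


# The four lifecycle phases described in this article, in order.
PHASES = ["discovery", "poc", "mvp", "scale"]


def gate_decision(exit_criteria: dict[str, bool]) -> Decision:
    """GO only if there is at least one criterion and all are met."""
    if exit_criteria and all(exit_criteria.values()):
        return Decision.GO
    return Decision.NO_GO


def next_phase(current: str, decision: Decision) -> Optional[str]:
    """Return the next phase, or None if stopped or already at the last phase."""
    if decision is Decision.NO_GO:
        return None
    idx = PHASES.index(current)
    return PHASES[idx + 1] if idx + 1 < len(PHASES) else None


# Hypothetical Discovery report-out: the data turned out to be inaccessible.
discovery_report = {
    "problem_sized_with_data": True,
    "data_accessible": False,
    "stakeholders_aligned": True,
}
decision = gate_decision(discovery_report)
print(decision.value, next_phase("discovery", decision))
```

In this example the initiative stops at the Discovery gate, before any POC spend is committed.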

The Role of AI Product Manager

The role of the AI product manager is to be the connective tissue between all teams involved in building an AI product. This sounds simple but requires a rare combination of capabilities.

The AI product manager must:
- Sit with executive leaders to explain vision, make pitches, and secure funding
- Turn around and discuss API integration challenges with engineering teams
- Translate technical findings into business language for the steering committee
- Keep the entire team focused on the end goal for the customer
- Inspire confidence when problems feel overwhelming
- Resist pressure to reduce scope just to claim a quick win

This last point is particularly important. It is very easy for teams to become distracted or for problems to feel insurmountable. Leaders often fear accountability, so they start reducing scope to put a win on the board. The AI product manager must ensure everyone understands that the product is not a product until the entire end-to-end process achieves its goal for the customer.

AI product managers are not junior or even mid-level contributors. They should be viewed as executive-level change agents who require the same respect and visibility as other executives. The difference is that AI product managers are technical in nature. They are on the ground. They see how the product is built. They understand it deeply.

This aligns with what McKinsey found in their 2025 State of AI research: organizations with high AI performance are significantly more likely to report that senior leaders demonstrate ownership and commitment to AI initiatives. The AI product manager embodies this leadership at the working level.

Where Does AI Product Fit In?

AI Product operates as a sister team alongside App Development, Data Science, Data Engineering, Cloud Engineering, and Business Units. The key difference is the matrix structure: Product managers pull resources from functional teams to assemble cross-functional product teams focused on specific AI products.

This contrasts with traditional IT organizations, where work goes into each vertical individually and teams must work out how to coordinate on their own. The cross-functional team reports through a steering committee to executive leadership. This consolidates decision-making and significantly reduces the number of leaders who must be constantly aligned on every initiative. Instead of coordinating across multiple department heads for each decision, the steering committee becomes the single point of governance.

This structure maintains deep technical expertise from functional teams while keeping initiatives aligned with business objectives and moving quickly.

Common Issues and How to Navigate Them

Building AI products surfaces organizational challenges that go beyond technical complexity. Being prepared for these issues is essential.

The Probabilistic Nature of AI Systems

Perhaps the most significant cultural challenge is that IT leaders and engineers are deeply uncomfortable with the probabilistic nature of AI systems. Traditional software is deterministic. Input A produces output B, every time. AI systems produce varying results based on context, and some percentage of outputs will be wrong.

This causes real friction. Middle managers unfamiliar with these systems can become hyper-fixated on eradicating any source of error. Conversations get stuck on edge cases and failure modes. The instinct to demand zero defects is understandable but fundamentally misaligned with how AI works.

The solution is human-in-the-loop (HITL) design. Any probabilistic output that cannot be reduced to acceptable error levels must go through human review. The critical insight is that acceptable error means error rates comparable to the human process being replaced. If humans currently make mistakes on 5% of cases, demanding that AI achieve 0.1% error before deployment is not a reasonable standard.
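A minimal sketch of this idea follows, assuming the model exposes a confidence score for each output and that the error rate of the existing human process has been measured. The names, the threshold, and the sample numbers are hypothetical; in practice, confidence scores need calibration before a threshold on them is meaningful.

```python
from dataclasses import dataclass


@dataclass
class Prediction:
    case_id: str
    value: str
    confidence: float  # model's score in [0, 1]; assumed to be available


def route(pred: Prediction, threshold: float) -> str:
    """Send low-confidence outputs to a human reviewer, automate the rest."""
    return "auto" if pred.confidence >= threshold else "human_review"


def meets_human_baseline(ai_errors: int, ai_total: int,
                         human_error_rate: float) -> bool:
    """Compare the AI error rate on automated cases to the human baseline."""
    if ai_total == 0:
        return False  # no evidence yet, so do not claim parity
    return ai_errors / ai_total <= human_error_rate


# Hypothetical cases and a hypothetical 0.90 confidence threshold.
clear_case = Prediction("quote-001", "standard pricing", 0.97)
edge_case = Prediction("quote-002", "unusual coverage", 0.55)
print(route(clear_case, 0.90), route(edge_case, 0.90))

# If humans err on 5% of cases, 4 errors in 100 automated cases clears the bar.
print(meets_human_baseline(ai_errors=4, ai_total=100, human_error_rate=0.05))
```

The acceptance test is relative to the measured human baseline rather than to zero, which is the standard argued for above.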

HITL interfaces require a complete rethink of UI design. The original system was designed for manual input. The new interface is built for review, with the aim of reducing review time as much as possible. Success depends equally on two things: the model must be as accurate as possible to maximize the automation rate, and the software experience must be streamlined so human reviewers can adjudicate edge cases quickly.

Accountability Displacement

Because AI products are unfamiliar territory for most teams, there is a natural tendency to push all accountability onto the AI product manager and data science teams. When errors occur in the system, engineering and business teams will point fingers at "the AI" rather than accepting shared responsibility.

This must be identified and addressed early in the product lifecycle. AI products are built by cross-functional teams. Everyone is accountable for success. Establishing this expectation at the outset prevents corrosive blame dynamics from derailing projects later.

Scope Creep and the "Perfect is the Enemy of Good" Trap

Teams often expand requirements mid-project, adding features or demanding capabilities that were never part of the original business case. Alternatively, they become paralyzed by the pursuit of perfection, unable to ship until every conceivable edge case is handled.

The AI product manager must maintain relentless focus on the original objective. The question is always: does this addition move us closer to the goal we defined for the customer? If not, it goes in the backlog for future iterations, not the current release.

Data Quality and Availability Challenges

Every AI initiative eventually confronts the reality that enterprise data is messier, more fragmented, and less accessible than anyone anticipated. Data that "exists" may not be available in usable form. Systems that should integrate do not. Historical data has inconsistencies that invalidate assumptions.

This is why the Discovery phase is so important. Surface these issues before committing significant resources. Build realistic timelines that account for data remediation. Do not assume data problems will magically resolve themselves.

Change Management and User Adoption

Even technically successful AI products can fail if users do not adopt them. Adoption requires deliberate change management. As BCG research indicates, roughly 70% of challenges in AI projects stem from people and process issues rather than technical ones.

Users need to understand how the new system benefits them, not just the organization. They need training and support during the transition. They need channels to provide feedback and report issues. Neglecting the human side of AI transformation virtually guarantees disappointing results.

Integration with Legacy Systems

Enterprise AI products rarely exist in isolation. They must connect to CRMs, ERPs, data warehouses, and custom applications that may be decades old. These integrations are consistently more difficult and time-consuming than anticipated.

Budget significant time and resources for integration work. Identify integration requirements during Discovery. Test integrations thoroughly during POC. Do not assume that "the API will just work."

What the Industry Gets Right and Where My Experience Goes Deeper

The industry consensus on AI products is directionally correct. Enterprise AI does require integration across systems. It does need to address business challenges. It does involve automation and improved decision-making.

Where my experience goes deeper is in the emphasis on complete process transformation rather than point solution deployment. The industry talks about AI transformation, but many implementations still focus on individual use cases or departmental solutions.

A true AI product rethinks the entire customer journey. It asks not "where can we add AI?" but "what should this process look like if we designed it today with no legacy constraints?" This is harder. It requires more organizational change. It takes longer to deliver initial results.

But the payoff is transformational rather than incremental. You do not get 10% efficiency gains. You get 80% reductions in cycle time. You do not slightly improve customer experience. You fundamentally change what is possible.

This aligns with Bain's finding that "ROI comes from reimagining how work gets done and how a company competes." The organizations capturing real value from AI are those willing to redesign business processes with AI at the core rather than layering AI onto existing operations.

The Path Forward

If you are a data science leader, an AI product manager, or an executive responsible for AI initiatives, I want to leave you with several takeaways.

Resist the temptation to move fast by skipping Discovery. The pressure to show quick wins leads organizations to jump directly into building without validating that they are solving the right problem. This creates expensive failures that could have been avoided with a few weeks of rigorous analysis.

Think in terms of customer outcomes, not technical capabilities. The customer does not care about your models, your architecture, or your org chart. They care about getting what they need quickly and accurately. Work backward from that outcome.

Accept that AI products require organizational change. You cannot transform processes without transforming how people work. Budget time and resources for change management. Involve stakeholders early and often.

Build for humans and machines to work together. The most effective AI products are not fully autonomous. They combine AI capabilities with human judgment in thoughtful ways. Design HITL interfaces that make human review efficient rather than treating it as a failure mode.

Maintain focus on the end goal. The AI product manager's most important job is preventing scope drift, premature optimization, and the temptation to declare victory before the full process transformation is complete.

Fail early if you are going to fail. The phased approach with steering committee decision gates exists to kill initiatives that are not working before they consume significant resources. Use it.

The opportunity in AI products is enormous. Organizations that master this approach will operate at speeds and quality levels that their competitors cannot match. But capturing that opportunity requires more than technical capability. It requires the courage to question everything about how work currently gets done and the discipline to rebuild from first principles.

The technology is ready. The question is whether your organization is ready to truly transform.

What challenges have you faced in building AI products? What organizational barriers have been most difficult to overcome? I would appreciate hearing your experiences in the comments.

Further Reading

For those interested in exploring AI product management and transformation further, I recommend:

Marily Nika's AI Product Academy - One of the leading resources for AI product managers, covering everything from fundamentals to advanced implementation strategies.

The Unique Challenges of AI Product Management - A thoughtful exploration of how AI product management differs from traditional product management.

Debunking the Myths of AI and Product Management - Practical perspectives on how AI tools can enhance product management workflows.

McKinsey's State of AI 2025 Report - Comprehensive research on AI adoption trends and success factors across industries.

Bain & Company's Unsticking Your AI Transformation - Insights on why most organizations remain stuck in AI experimentation and how to move toward real transformation.